Once all components are deployed we should verify the following: However, if the application reading/writing to our ES cluster is deployed within the cluster then the Elasticsearch service can be accessed by. The annotation “/load-balancer-type: Internal” ensures this. The purpose of the service deployed here is to access the ES Cluster from outside the Kubernetes cluster but still internal to our subnet. We will use the following manifest to create the Deployment and External Service for the Client Nodes: We can also set a flag to allow volume expansion on the fly. This can be done by specifying the volume type when creating the storage class. It is important to format the persistent volume before attaching it to the pod. The headless service in the case of data nodes provides stable network identities to the nodes and also helps with the data transfer among them.

We will use the following manifest to deploy Stateful Set and Headless Service for Data Nodes: apiVersion: v1 The headless service named elasticsearch-discovery is set by default as an env variable in the docker image and is used for discovery among the nodes. It can be seen above that the es-master pod named es-master-594b58b86c-bj7g7 was elected as the leader and the other 2 pods were added to the cluster. root$ kubectl -n elasticsearch logs -f po/es-master-594b58b86c-9jkj2 | grep ClusterApplierService When following the logs of the master-nodes, you will also see when new data and client nodes are added.

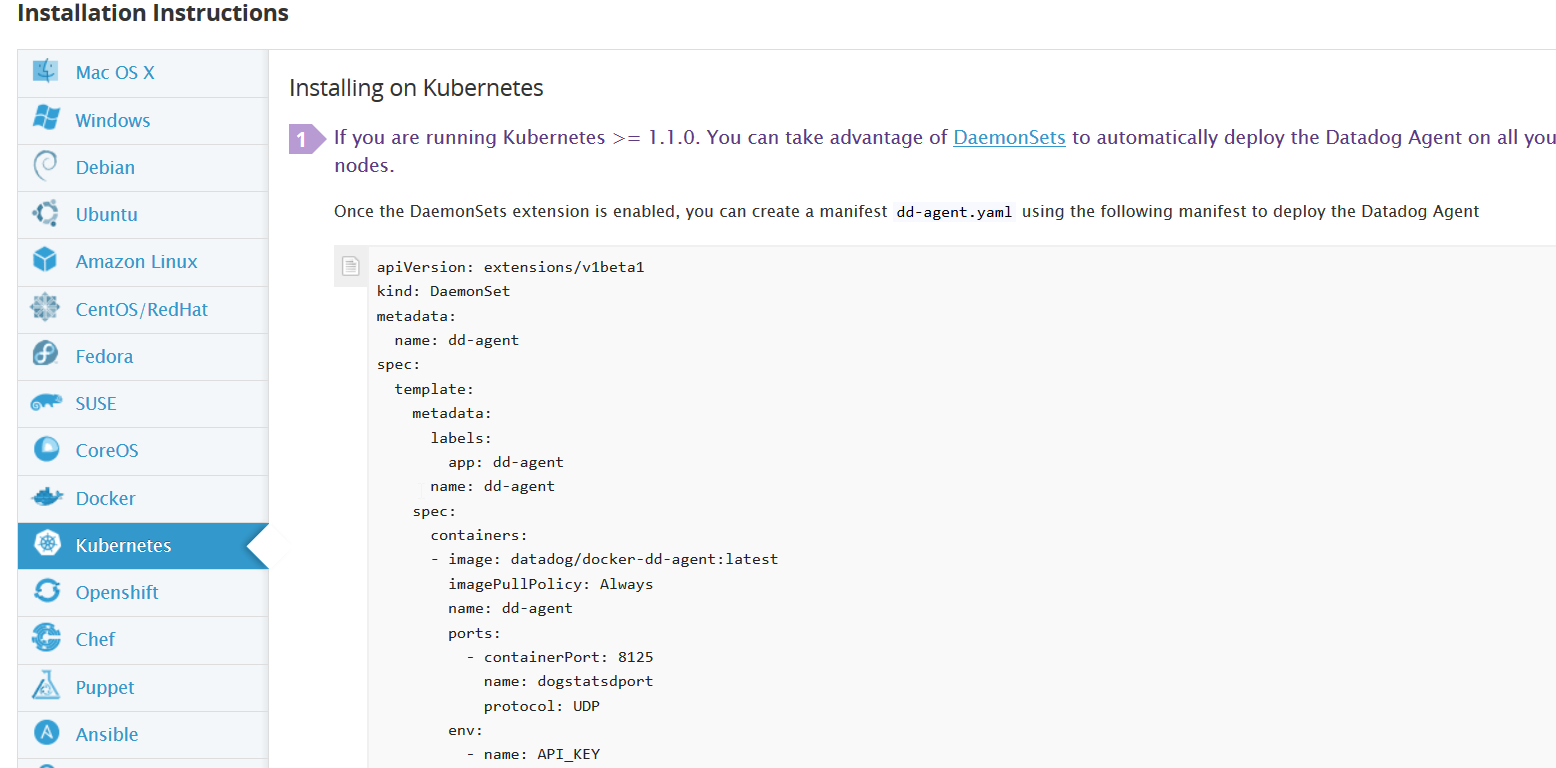

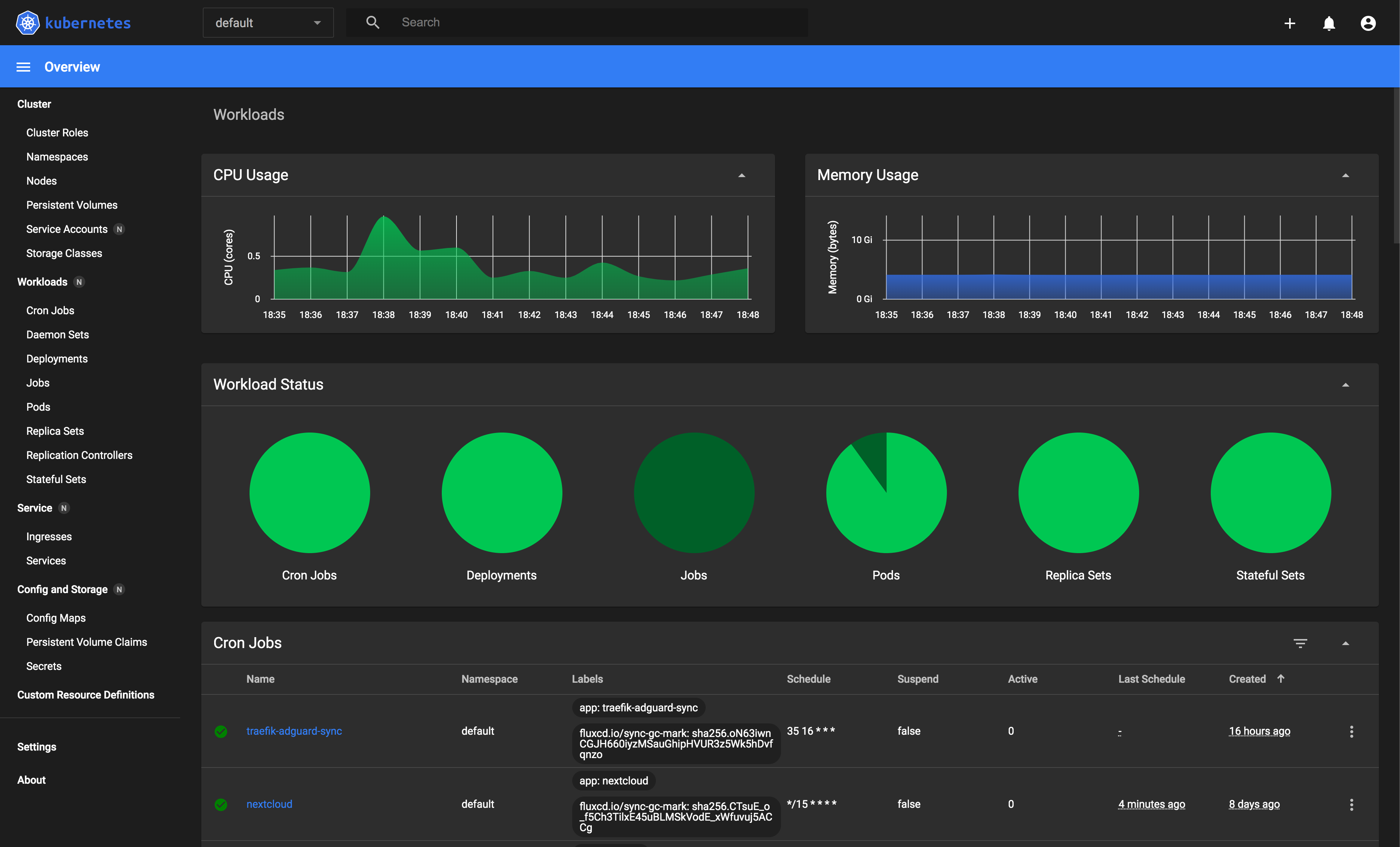

This is when the master-node pods choose which one is the leader of the group. If you follow the logs of any of the master-node pods, you will witness the master election among them. Image: quay.io/pires/docker-elasticsearch-kubernetes:6.2.4 PreferredDuringSchedulingIgnoredDuringExecution: Let's jump right at deploying these services to our GKE cluster.Ģ.1 Deployment and Headless Service for Master Nodesĭeploy the following manifest to create master nodes and the headless service: apiVersion: v1 Setting correct Pod-AntiAffinity policies among similar pods in order to ensure HA if a worker node fails.In case of 3 masters we have set it as 2. Setting NUMBER_OF_MASTERS (to avoid split-brain problem) env variable for master deployment.HPA (Horizontal Pod Auto-scaler) deployed for Client Nodes to enable auto-scaling under high load.Kibana and ElasticHQ Pods are deployed as Replica Sets with Services accessible outside the Kubernetes cluster but still internal to your Subnetwork (not publicly exposed unless otherwise required).Elasticsearch Client Node Pods are deployed as a Replica Set with an internal service which will allow access to the Data Nodes for R/W requests.Elasticsearch Master Node Pods are deployed as a Replica Set with a headless service which will help in Auto-discovery.Elasticsearch Data Node Pods are deployed as a Stateful Set with a headless service to provide Stable Network Identities.The Elasticsearch setup will be extremely scalable and fault tolerant. We will be using Elasticsearch as the logging backend for this. This is the first post of a 2 part series where we will set-up production grade Kubernetes logging for applications deployed in the cluster and the cluster itself. Using ES and Kibana we can search through logs with easy queries and filter by fields.Basic Infrastructure for ELk 1.Introduction Labels and JSON log fields are properly named and parsed. 
Kubernetes logs autodiscovery and JSON decoding provide very good visibility into log stream. Everything happens before line filtering, multiline, and JSON decoding, so this input can be used in combination with those settings. This input searches for container logs under the given path, and parse them into common message lines, extracting timestamps too. Use the container input to read containers log files. Hints tell Filebeat how to get logs for the given container. As soon as the container starts, Filebeat will check if it contains any hints and launch the proper config for it. The hints system looks for hints in Kubernetes Pod annotations or Docker labels that have the prefix co.elastic.logs. Hints based autodiscoverįilebeat supports autodiscover based on hints from the provider. The Kubernetes autodiscover provider watches for Kubernetes nodes, pods, services to start, update, and stop.Īs well it recognise a lot of additional labels and statuses related to Kubernetes objects. Autodiscover allows you to track them and adapt settings as changes happen. When you run applications on containers, they become moving targets to the monitoring system. var/log/containers/*$.log What’s so cool about above configuration Filebeat Autodiscover
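To make the hints-based autodiscover described here more concrete, below is a minimal sketch of a Filebeat configuration fragment. It assumes Filebeat runs on every node with a NODE_NAME environment variable injected into its pod. The paths value follows the pattern commonly shown in the Filebeat documentation and is not necessarily the configuration this post refers to.

```yaml
# filebeat.yml (fragment): an illustrative sketch, not the author's configuration
filebeat.autodiscover:
  providers:
    - type: kubernetes
      # NODE_NAME is an assumption: it must be set on the Filebeat pod,
      # for example from spec.nodeName via the Downward API
      node: ${NODE_NAME}
      # Read hints from annotations/labels prefixed with co.elastic.logs
      hints.enabled: true
      # Default config applied to a container; hints can adjust it
      hints.default_config:
        type: container
        paths:
          - /var/log/containers/*${data.kubernetes.container.id}.log
```

With a provider like this in place, a pod annotation such as `co.elastic.logs/json.keys_under_root: "true"` would ask Filebeat to decode that container's JSON log lines into top-level fields, while `co.elastic.logs/enabled: "false"` would exclude the container from collection.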
